At a recent AI meetup I talked to people from heavily regulated industries about AI coding tools like Claude Code. These conversations tend to follow the same pattern. "I don't code, so why would I use this?" Fair question, but these tools aren't just for developers anymore. You talk to them. They build things for you. They're powerful exactly because you don't really need to be able to code. Then the second wall: "We can't use these tools. Compliance won't allow it." I get it. These are real constraints.

But here's the problem: these same people are often the AI leads for their companies. They're making decisions about AI strategy, evaluating vendors, and deciding what's possible. And they've never actually used the most interesting tools that AI has produced.

The Experience Gap

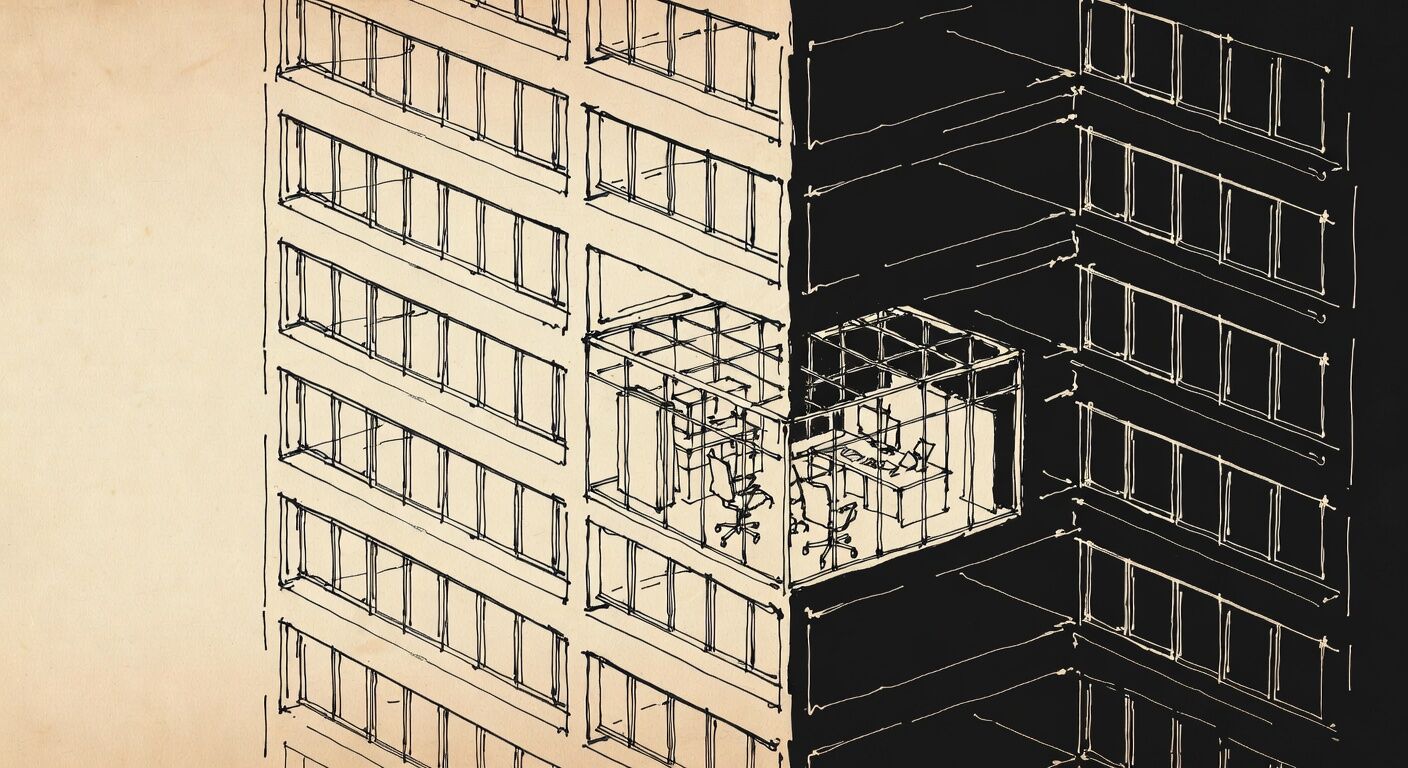

There's a difference between using a software product and using a tool that creates software. When you buy standard software, you're hoping it solves your problem. You're at the mercy of what the vendor built. When you use AI coding tools, you start with your problem. What do I need? What would solve this? Then you explore paths to get there. The tool adapts to you, not the other way around.

More importantly, you learn what's actually possible. What these tools and the models are good at. What they struggle with. What takes 30 seconds versus what takes 30 tries. You develop intuition about AI capabilities that no amount of vendor presentations can give you. Without this hands-on experience, you're making decisions blind. You can't properly evaluate what vendors are selling you. You can't judge whether you should build something in-house or buy it. You can't tell the difference between real AI capability and marketing hype.

The gap between AI power users and people who've never used these tools is already massive. Power users are thinking in complex workflows. They understand what's trivial and what's hard. They know when AI is the right tool and when it's not. People without this experience are still thinking in terms of buying the next software package.

This Isn't About Replacement

Some people think AI means they won't have to think anymore. That everything will just happen automatically. It's the opposite. Every company needs people who understand what AI can do and how to apply it. These people need to exist in engineering and on the business side. But if you don't give people the chance to learn these tools, how will they develop this understanding?

The Sandbox Solution

"We can't use these tools with our client data or in our production environment." Agreed. Don't do that. But this is a straightforward IT management problem. Create sandbox environments. Give people separate machines. Let them experiment with mock data.

Take a real use case from inside your company. Create a CSV with made-up data that has the same structure. If someone accidentally shares that outside your network, nothing happens. It's fake data. Your people learn how AI tools work. They understand what's possible. They can judge software vendors better. They can participate meaningfully in AI strategy discussions. They actually know what they're talking about. If you don't do this, your people stay where they are. They don't level up. They miss everything that's happening. They keep trying to solve new problems with old approaches.
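To make the mock-data step concrete, here is a minimal sketch of generating a fake CSV in Python, using only the standard library. The column names, the region list, and the file name `mock_customers.csv` are all made up for illustration. Swap in the structure of whatever real export you want to imitate.

```python
import csv
import random
from datetime import date, timedelta

# Hypothetical schema: replace with the column names of your real export.
FIELDS = ["customer_id", "signup_date", "region", "monthly_spend"]
REGIONS = ["north", "south", "east", "west"]  # made-up categories


def make_mock_rows(n, seed=0):
    """Return n fake rows that match FIELDS but contain no real data."""
    rng = random.Random(seed)  # seeded so the same file can be regenerated
    start = date(2023, 1, 1)
    rows = []
    for i in range(n):
        rows.append({
            "customer_id": f"MOCK-{i + 1:05d}",
            "signup_date": (start + timedelta(days=rng.randrange(365))).isoformat(),
            "region": rng.choice(REGIONS),
            "monthly_spend": f"{rng.uniform(10, 500):.2f}",
        })
    return rows


def write_mock_csv(target, n=100, seed=0):
    """Write a header plus n fake rows to an open text file object."""
    writer = csv.DictWriter(target, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(make_mock_rows(n, seed))


if __name__ == "__main__":
    # Assumed output file name; any path inside the sandbox works.
    with open("mock_customers.csv", "w", newline="") as f:
        write_mock_csv(f, n=100)
```

Every value comes from a random generator rather than from a real record, so the file carries the shape of your data without any of its contents. In practice you could just as well ask the AI tool itself to write a script like this for you, which is a good first sandbox exercise.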

Why SaaS Companies Are Worried

There's a reason software-as-a-service stocks are down. Companies are starting to realize they don't need to buy as much pre-built software anymore. If you understand AI coding tools, you can start building things that would have required buying enterprise software before. Not everything. Not always. But enough to change the calculation. You still might buy software. But you're making an informed choice, not a default one. You know what you could build versus what you should buy. You understand the trade-offs.

What This Actually Requires

Here's what happens if you don't address this: Your AI strategy team has no hands-on experience with AI tools. They rely entirely on vendor demos and sales pitches. They can't distinguish between real capability and marketing. They make expensive mistakes because they don't understand what's actually possible.

You need people who understand how things connect. People who know your business processes and can imagine how AI might fit in. People who've actually used these tools enough to know what's realistic. These people need two things: permission to experiment, and safe environments to do it in. Sandboxes aren't complicated. Separate machines aren't expensive. Mock data takes some work to create but not that much. What's expensive is staying behind while everyone else learns what's possible.