When you paste code into ChatGPT to debug an error, where does that code go? Who sees it? If it contains your company's proprietary logic, customer data, or unreleased features, does that matter?

Most people treat AI privacy as binary: either you care about privacy (and avoid AI tools entirely), or you don't care (and use whatever's convenient). But that framing misses something important. The real question isn't whether to use AI tools. It's who you're comfortable sharing your data with, and whether you're making that choice consciously.

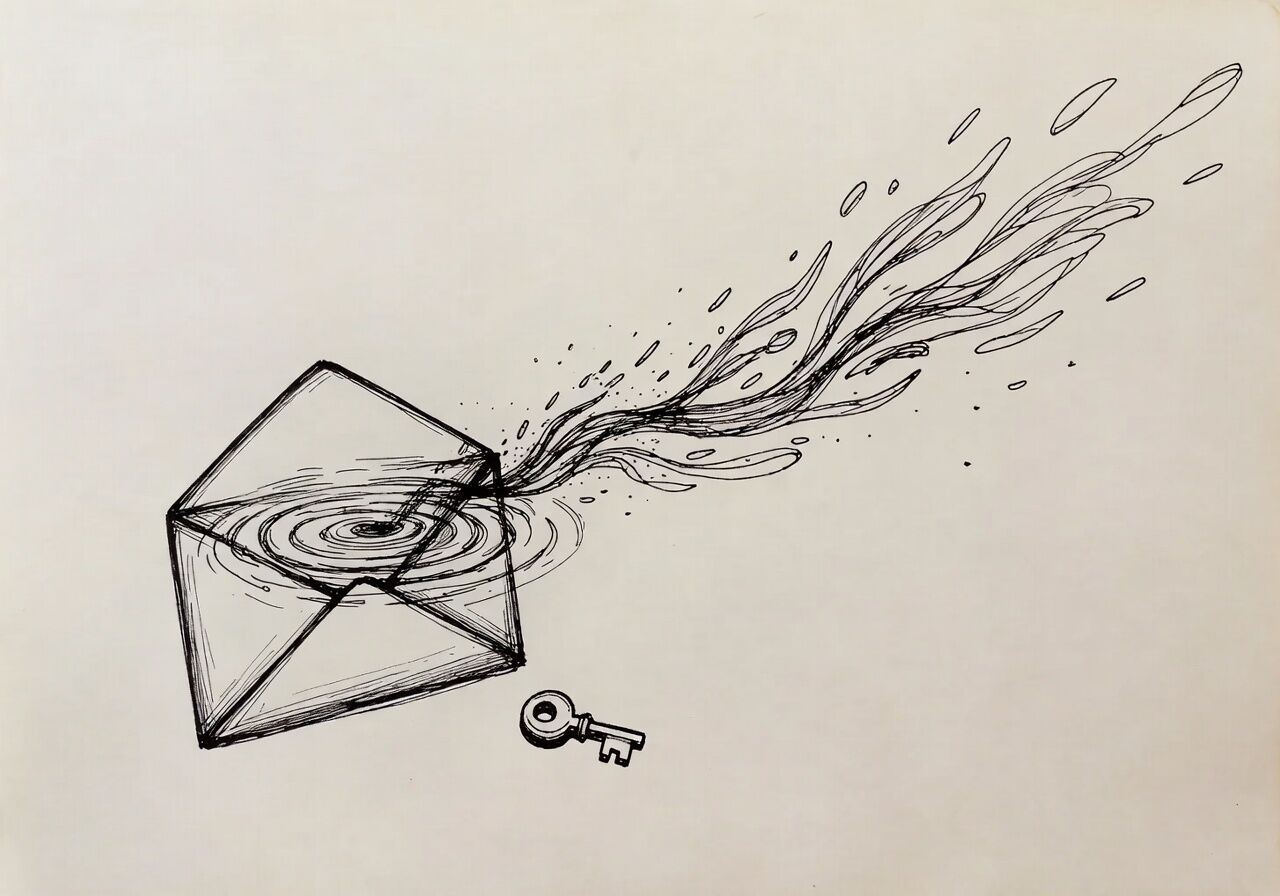

The Invisible Data Flow

You probably know your conversations with AI services get sent to company servers. But do you know what happens next?

Some services explicitly don't use your data for training. Others are vague about it. Some retain conversations indefinitely. Others delete them after 30 days. Some share data with third-party partners. Some don't.

The specifics vary wildly between providers, and they change. A service that didn't use your data for training last year might have updated its terms. That free tier you're using might have different privacy rules than the paid business tier.

Most people don't check. They just accept the defaults and assume someone else has thought about this.
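If you do want to check, the questions above fit in a small checklist. Here is a minimal sketch in Python; the `ProviderPolicy` record, its field names, and the "ExampleAI" provider are all hypothetical, invented for illustration and not taken from any real service's terms. A value of `None` means you haven't found a clear answer yet.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProviderPolicy:
    """What you know about one service tier (hypothetical structure)."""
    name: str
    tier: str
    trains_on_your_data: Optional[bool]        # None = terms are vague or unchecked
    retention_days: Optional[int]              # None = retention period not stated or unchecked
    shares_with_third_parties: Optional[bool]  # None = terms are vague or unchecked


def open_questions(policy: ProviderPolicy) -> List[str]:
    """Return the privacy questions that still have no clear answer."""
    questions = []
    if policy.trains_on_your_data is None:
        questions.append("Is my data used for training?")
    if policy.retention_days is None:
        questions.append("How long are conversations retained?")
    if policy.shares_with_third_parties is None:
        questions.append("Is data shared with third parties?")
    return questions


# Made-up example: a free tier whose training policy you haven't verified.
free_tier = ProviderPolicy(
    name="ExampleAI",
    tier="free",
    trains_on_your_data=None,
    retention_days=30,
    shares_with_third_parties=False,
)
print(open_questions(free_tier))
```

Because terms change and differ between tiers, a record like this is only a snapshot: keep one per tier and revisit it when the provider updates its policy.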

What "Private Enough" Actually Means

Here's what's interesting: not all data needs the same level of protection.

A prompt about formatting a CSV file? Probably fine on any major AI service. Your company's quarterly financial strategy draft? That deserves more scrutiny. A conversation about career challenges that names your colleagues? You might care who sees that.

The spectrum looks something like this:

Public knowledge and generic tasks → Use whatever's convenient

Work-related but not sensitive → Check if the service uses your data for training

Confidential business information → Consider local models or services with strong privacy guarantees

Legally protected data → Know your compliance requirements and verify the service meets them
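The spectrum above is effectively a lookup table, and writing it down as one makes the decision explicit. The sketch below is only an illustration: the `Sensitivity` names and the `guidance_for` helper are assumptions of this example, and deciding which tier a given prompt belongs to remains a human judgment.

```python
from enum import Enum


class Sensitivity(Enum):
    """The four tiers of the spectrum (names are illustrative)."""
    PUBLIC = 1             # public knowledge and generic tasks
    WORK = 2               # work-related but not sensitive
    CONFIDENTIAL = 3       # confidential business information
    LEGALLY_PROTECTED = 4  # legally protected data


GUIDANCE = {
    Sensitivity.PUBLIC: "Use whatever's convenient",
    Sensitivity.WORK: "Check if the service uses your data for training",
    Sensitivity.CONFIDENTIAL: (
        "Consider local models or services with strong privacy guarantees"
    ),
    Sensitivity.LEGALLY_PROTECTED: (
        "Know your compliance requirements and verify the service meets them"
    ),
}


def guidance_for(level: Sensitivity) -> str:
    """Return the suggested action for a sensitivity tier."""
    return GUIDANCE[level]


# The examples from earlier, classified by hand:
print(guidance_for(Sensitivity.PUBLIC))        # formatting a CSV file
print(guidance_for(Sensitivity.CONFIDENTIAL))  # quarterly financial strategy draft
```

The value is not in the code itself but in the habit it encodes: pick the tier first, then pick the tool.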

The problem is that most people treat everything the same way. They either share everything with cloud services or avoid AI entirely, citing "privacy concerns." Both approaches miss the nuance.

The Local Alternative You Might Not Know About

Running AI models locally on your computer means your prompts are processed on your own machine instead of being sent to a provider's servers. No remote servers, no third parties, no data-retention policy to parse. You control where the data goes.

A few years ago, local models were too weak to be useful for most work. That's changing: not for everything, but for many common tasks, local models are becoming genuinely capable.

There's a trade-off, of course. Local models are typically less powerful than the largest cloud-based ones. They require more setup. They won't work for every use case.

But for sensitive work, the trade-off might be worth it. And as local models improve, that calculation shifts.

The Question You Should Be Asking

When you use an AI tool, you're making a choice about who to trust with your data. Sometimes that choice is conscious and informed. Often it's not.

The question isn't "Should I use AI tools?" It's "Do I understand who has access to what I'm sharing, and am I comfortable with that?"

If you're using ChatGPT to debug code that contains your company's core business logic, that's a choice. Maybe it's the right choice for your situation. But it should be an intentional one, not just the default because ChatGPT is the first tool you thought of.

What Changed Recently

The AI privacy landscape is shifting. Major providers now offer business tiers with stronger privacy guarantees. Local models capable enough for many everyday tasks are becoming available. Privacy regulations are getting more specific about AI tools.

But the defaults haven't changed much. If you don't actively choose a privacy-focused option, you're probably not getting one.

The opportunity here is to match your tool choice to your data sensitivity. Not everything needs maximum privacy. But sensitive work deserves more consideration than "I'll use whichever AI tool is already open in my browser."

Privacy in AI isn't about avoiding these tools. It's about using them with your eyes open.